|

Most emerging countries have some version of what is called a "shanty town" or ghetto. These are usually home to people living in poverty who do not have a proper house. We all know this creates a vicious cycle of abuse, crime and more poverty. We also know that in some otherwise affluent locations, the ability to build homes is limited, which causes prices to skyrocket far more than they should. What if there were a way to build high-quality homes while keeping the cost in check? Many poor people will not want a home that is considered a "poor people's house"; they want to feel part of the mainstream. There have been several initiatives to solve this problem, including the $300 House initiative. It took the approach of having the occupants do most of the building work, using a variety of local materials.

Here comes 3D Printing

This technology has been around for some time and has continued to mature as a mainstream manufacturing technique. It is now being applied to housing as well. Several companies have developed working solutions; the main ones are Mighty Buildings and Icon Build. They have built real houses and continue to refine their processes to make them more affordable.

What problem does a good home need to solve?

1. Comfort
2. Safety
3. Health
4. Self-esteem

If these are covered for the local situation, the home will be accepted over time. Some of the advantages of 3D printing are reduced waste on building sites and the ability to leverage new materials that could be more cost effective, among a host of others.

Who will pay for this?

The main reason these "shanty towns" exist is that most of the occupants cannot afford to build or buy a regular home. On the flip side, developing economies have fewer resources to provide a home for every citizen or family. It's great to talk about new technologies, but we also need to solve the problem of business models.

How to make it good business?

The first step is to get the cost down significantly without compromising on quality, much like how the advent of cloud technologies significantly reduced the cost of compute resources and made many 'Software as a Service' solutions extremely cheap. Each location also has its own local needs, such as earthquakes, hurricanes, break-ins etc.

The second step is to figure out payment structures. Payment could come from the owners themselves, or the government could provide some support. At the very least, the government may have land it could provide to citizens. An example structure would be helping the future owner gain an in-demand skill that leads to employment to pay for the home.

What are the next steps?

Ultimately, at this stage it's all about education on what is possible. Get the governments of emerging countries to at least explore the relevant innovations and encourage adaptation for local use - evangelizing and incentivizing innovation. One has to take a long-run perspective on these issues, as that could actually justify the economics of making the appropriate investments. It may help to reduce crime, increase the supply of skilled labor and improve quality of life for all.

What do you think of this? Share your perspective.

Most people take notes with a regular paper notepad or book and then transcribe them into typed notes for a meeting and so on. How could we replace this with digital technology? The basic requirements here:

Some people can actually type very fast on a laptop, so they have moved on from relying on handwriting.

Typical Writing Use Cases

There are other use cases, but these jump out as the lion's share of regular writing, and they will be explored with the various electronic writing options.

Reusable Notebooks and Smartpens

There are several solutions out there that can be considered a step up from traditional paper.

Rocketbook - Allows the user to erase the writing and use a smartphone to copy and send it to the cloud. It also supports handwriting-to-text conversion. Essentially, the notebook can be used many times over.

Smartpens - These pens use specially designed notebooks (not reusable), but the pen keeps the details and transfers them to the cloud by connecting wirelessly to a phone or computer.

Regular Tablets

Most people would have an iPad or Android (Samsung, Google etc.) tablet, as they provide a lot of functions and applications. However, most will agree that even with a pen, writing on these will not give you the paper experience, though it may be useful for taking quick notes or demonstrating some concepts. For real writing these are not ideal. There are some workarounds on the market, especially special screen protectors that improve the experience. A popular one is Paperlike for the iPad. Some complain that this affects the viewing experience on the tablet while not really delivering the ultimate paper experience. Hands down, tablets are great all-round devices and provide lots of benefits, but that paper writing experience is not there. The biggest disadvantage is that more functions also bring a lot more distractions. As tablets are here to stay, is it time to move away from the handwriting approach to taking notes?

E-ink or E-paper Tablets

E-ink has changed the way we read books, most popularly through Amazon Kindles. However, there are also several popular tablets built with this technology for writing purposes.
The verdict is that these come very close to paper in terms of writing experience, with an electronic implementation.

Remarkable - This is a small company that builds its tablet primarily as a notetaker, with the ability to read some ebook formats. It also allows marking up documents, especially PDFs. There is big buzz about the Remarkable 2 coming out in September 2020 with an improved design. The complaints about these devices are the lack of advanced software features and the low on-device storage space. However, they get high marks for the writing experience. The size of both generations is 10.3”, which is a sweet spot for portability. This is probably the best candidate for digital note taking out there.

Onyx Boox Tablets - These are similar to the Remarkable in being E-ink devices, and they run Android as the main OS. The writing experience on the low-end ones is not so great. The Max 3 seems to be the most genuine writer from this company, but of course the size is 13.3”, which is huge for everyday portability.

Sony DPT Family - Sony also has a platform which it sells directly as well as through OEM deals with Fujitsu and Quirklogic. These are very similar to the Boox Max 3, especially in terms of some software features and writing experience. The experience on these is very good, but the cost is fairly high, running from $700 and up. The Papyr from Quirklogic seems to get very good marks for sharing the writing with others in real time as well as integration with cloud services.

There are some good options here, and it seems the disparity is mostly around software features. The 13.3” tablets could be good to keep on a desk for planning and tracking things, while the smaller ones are good for regular everyday note taking. The common concern here is cost, since they will not give the full functions of a regular tablet. Can one have a regular tablet and still get one of these?
Conclusion

The ideal here is to have a paper-like writing experience, translate the written material into readable text, and be able to store and share it electronically while being sustainable. Looking at the options presented, there are positives and negatives to fully replacing the paper notebook while benefiting from new technologies. On the one hand, the E-ink options are promising but come at a very high price with limited functions. Traditional tablets have many functions but are very poor in terms of the writing experience. To keep with the traditional approach, the Rocketbook is a great contender, as it keeps the writing feel and provides some technology improvements, plus the cost is very affordable. While the E-ink tablets may be the best choice overall, with the potential for automation, there is still a gap, especially around pricing, for a truly electronic solution: something that keeps the traditional experience and will not break the bank, ultimately below $200. With all of these options it also comes down to the specific writing feel for each individual. What is your take on this? Have you used one of these devices?

I have always had an interest in having one device which could be used in different ways. In the meantime, we have different devices that excel at specific tasks. If you had to pick one device due to budget, which one would it be? I would definitely keep a laptop for now, but the iPad with Magic Keyboard looks promising.

A great bootcamp will be held this summer for technology students in Jamaica. This will be conducted 100% virtually.

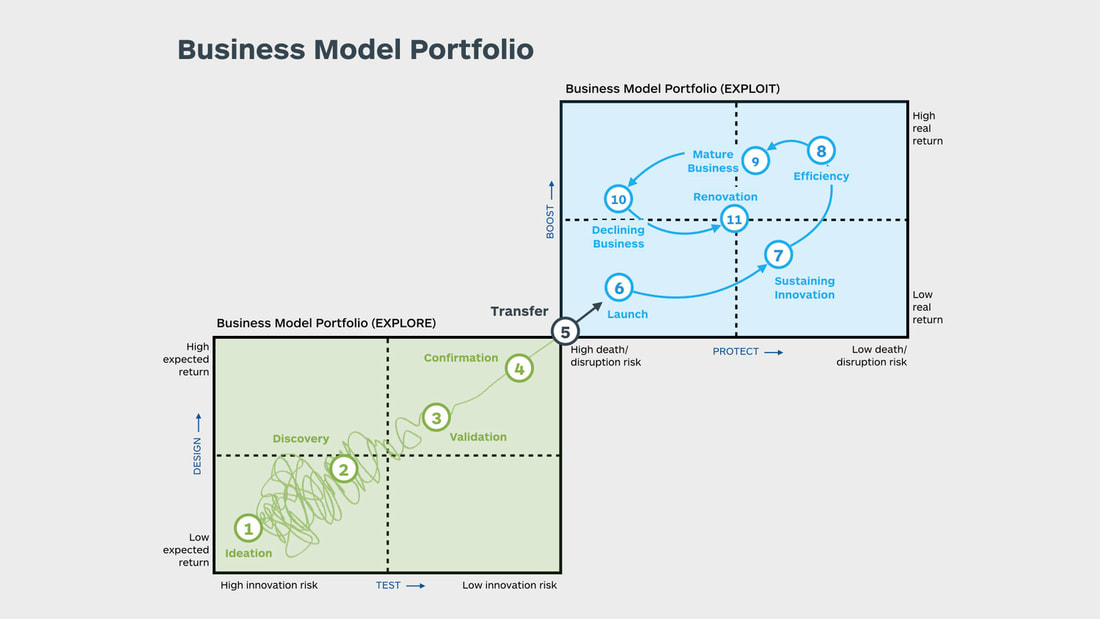

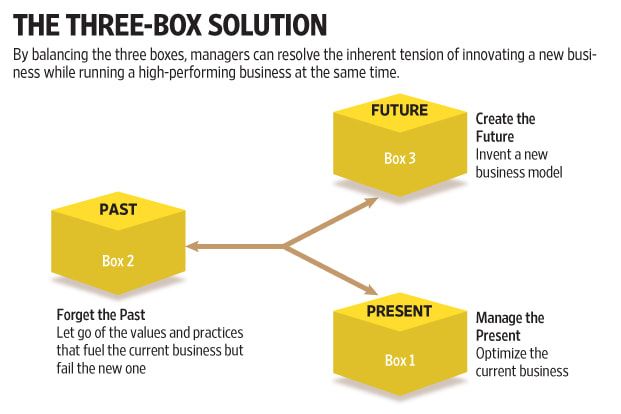

Get real-world developer training and hands-on experience building Facebook Messenger chatbots for conversational marketing and customer care solutions while preparing for employment. Attend online sessions in real time via video conference from the comfort of your home or classroom while working with virtual team members to build innovative solutions. Learn more on Technology Bootcamp.

As we work through the pandemic, it's important to understand the overall implications of each action and its effect on the global system as a whole.

There is a lot of buzz about innovation and the need for companies to stay relevant amid constant industry changes. How do you manage innovation? There are several approaches out there. The Strategyzer team came up with a concept for planning out an innovation portfolio, which can be done continuously to stay on top of the market. This is captured in the book The Invincible Company. Below is an example of the portfolio map used in this process. Another useful approach is the 3-Box Solution, where products are grouped into past, current and future buckets. The concept is captured in the book 3 Box Solution. There are many other books and articles on this topic, but these are two options to start with.

There are always debates around the role of Product Owners versus that of Product Managers. Many present the idea that Product Managers are strategic while Product Owners are tactical, mainly focusing on writing user stories and managing the backlog of features per sprint. Is this true? Looking at the responsibilities of Product Owners from Scrum.org, two elements stand out: 1. the Product Owner is accountable for the product and 2. he/she has ownership of the product. This means the individual would interact with customers and other stakeholders to define the vision of the product and then work with the scrum development teams to prioritize and deliver on this vision. While Scrum does not speak directly about strategy, marketing, sales and other activities, it is pretty obvious that the Product Owner would be involved in these areas as the party accountable for product success. One cannot properly manage a backlog and prioritize features without being fully involved in the strategic intent of the product. Ultimately, it's fairly clear that Product Owners are bona fide Product Managers and in fact more. An example is given of ex-Apple CEO Steve Jobs as the ultimate Product Owner. The following video provides a good overview of the product owner role. What is your take on this debate? Feel free to share your comments openly.

Are you curious about using your phone as a regular computer? With the speed and specifications of smartphones nowadays, this is technically very possible. The question is: how do we realize this solution? Are the drivers for this (1) economics, (2) convenience and/or (3) consolidation? While phone specifications have gone up, the price of laptops has come down significantly, so one could easily just buy a low-cost Chromebook to handle duties that require larger screens. However, there could be more to this situation. Take the Apple ecosystem for example: an iPhone is roughly $1000, and the MacBook Air is another $1000 or more. If you buy both, you are looking at $2000 minimum in cost. However, if someone came up with a solution that sells an upgraded iPhone experience on a laptop for $300, that may be appealing. Of course, the argument could be made that an iPad could do the trick, but a good iPad setup is about the same cost as a MacBook anyway. This is a topic worth exploring more in the coming months.

There are some good data points:

1. The smartphone is a critical piece of equipment for pretty much everyone across all social spectrums, which means it's foundational.
2. Several concepts have actually been crowdfunded on Kickstarter.com in recent times, which implies there is a market looking for a suitable solution.

The solutions vary from laptop-style to more desktop-type designs. A tablet is also something that has value, but if the need is for an essential solution, is it the most critical upgrade to a phone? Samsung DeX is one of the mainstream solutions out there, but of course it only works with Samsung phones. How could this be made widely available for pretty much any phone? Here is a promising foldable solution - cost about $55.00. The Nexjack Desktop solution is just $19 but only works with Samsung phones at this time. Here is a video sample of how this could be done.
There are other ways, but this is a kind of desktop approach with the idea of it being portable. Other perspectives in Part 1 of this topic - http://www.larklandmorley.com/home/computing-with-a-single-device-especially-a-smartphone
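The cost argument in this post comes down to simple arithmetic, which can be sketched in a few lines of Python. This is only an illustration using the rough prices quoted above; the $300 "laptop shell" is the hypothetical product imagined in the post, not a real one, and the variable and function names are made up for this example.

```python
# Rough prices in US dollars, taken from the figures quoted in the post.
IPHONE = 1000
MACBOOK_AIR = 1000   # the post says "$1000 or more", so this is a lower bound
LAPTOP_SHELL = 300   # hypothetical phone-powered laptop accessory


def setup_cost(*prices):
    """Total cost of a setup made up of the given device prices."""
    return sum(prices)


two_devices = setup_cost(IPHONE, MACBOOK_AIR)
phone_plus_shell = setup_cost(IPHONE, LAPTOP_SHELL)
savings = two_devices - phone_plus_shell

print(f"Phone + laptop: ${two_devices}")
print(f"Phone + shell:  ${phone_plus_shell}")
print(f"Difference:     ${savings}")
```

Swapping in local prices for a phone, a Chromebook or one of the dock solutions mentioned above gives a quick feel for whether the single-device route makes economic sense.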

There is clearly a lot of chatter around driverless cars and, in fact, flying cars. This technology is developing rapidly, and Tesla has shown a lot of practical progress in this area. Where do you think this is headed? Feel free to comment here. |

